Confusion Matrix, Accuracy, Precision, Recall, F1-Score

Table Of Contents:

- Confusion Matrix

- Structure Of Confusion Matrix

- Accuracy

- Precision

- Recall

- Specificity (True Negative Rate)

- F1-Score

- Fall-Out: False Positive Rate (FPR)

- Miss Rate: False Negative Rate (FNR)

- Balanced Accuracy

- Example Of Confusion Matrix

- Confusion Matrix For Multiple Classes

- Why Does Decreasing False Negatives Increase False Positives?

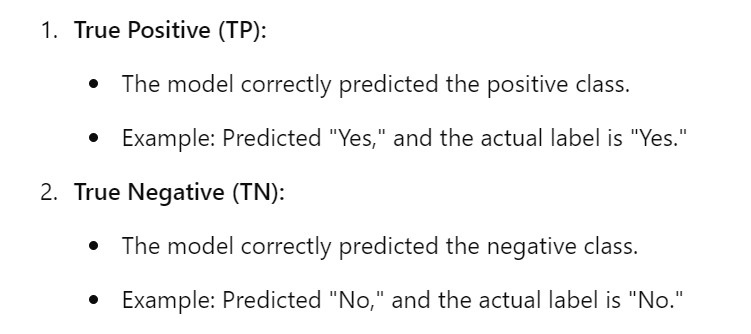

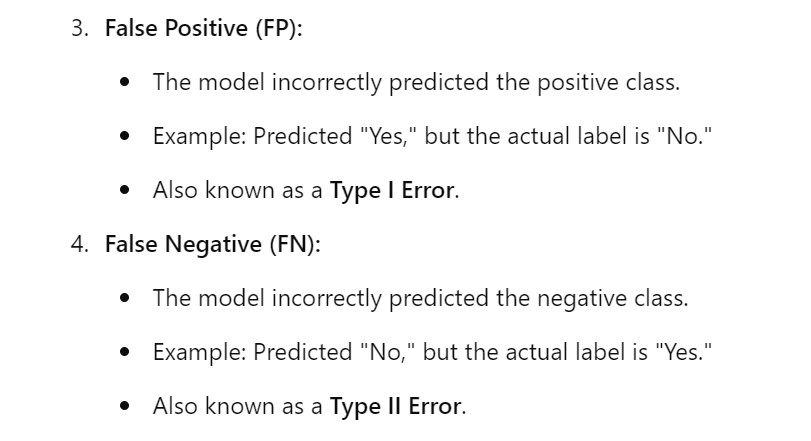

(1) Confusion Matrix

- A confusion matrix is a table that summarizes the performance of a classification model by counting, for every actual class, how many samples the model assigned to each predicted class.

- It shows not only how many predictions were correct but also which kinds of mistakes the model makes, and it applies to both binary and multi-class classification.

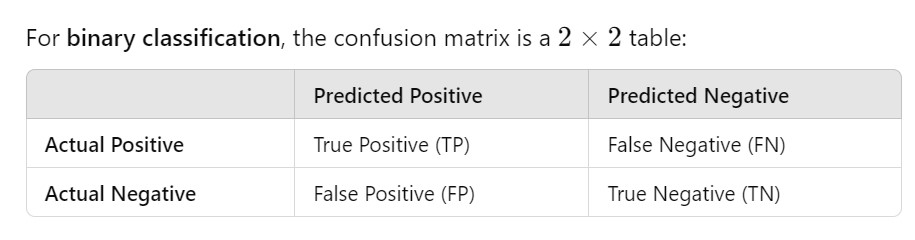

(2) Structure Of Confusion Matrix

Definition:

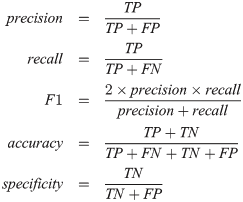

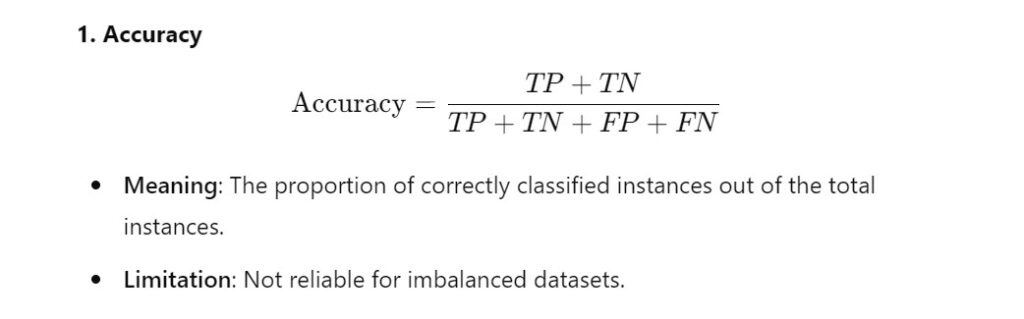

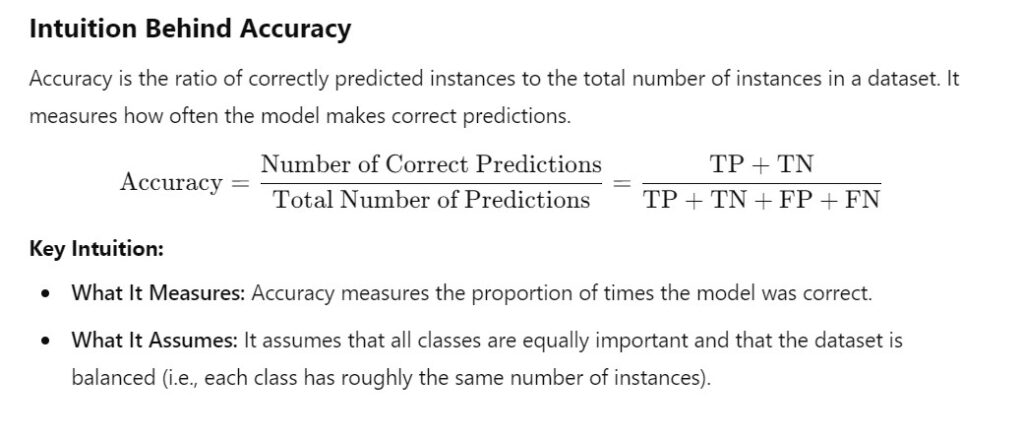

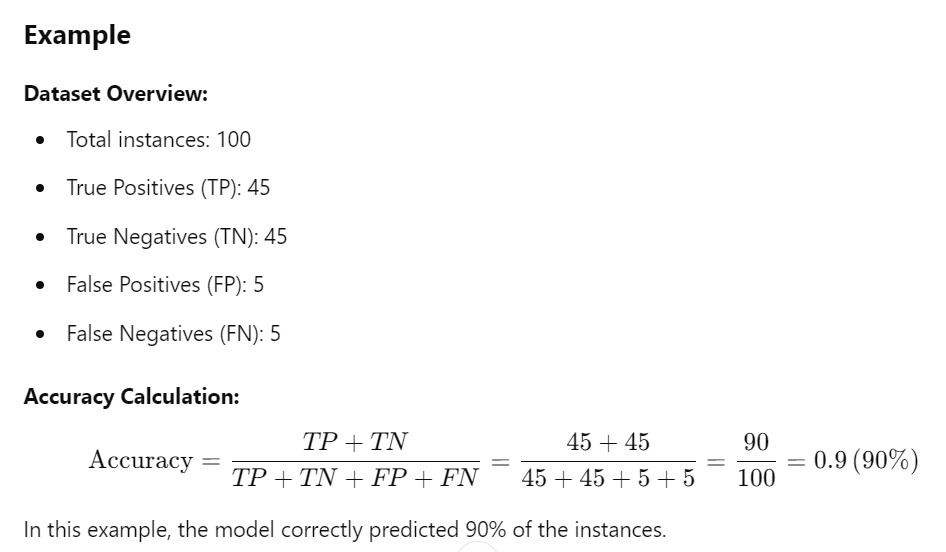

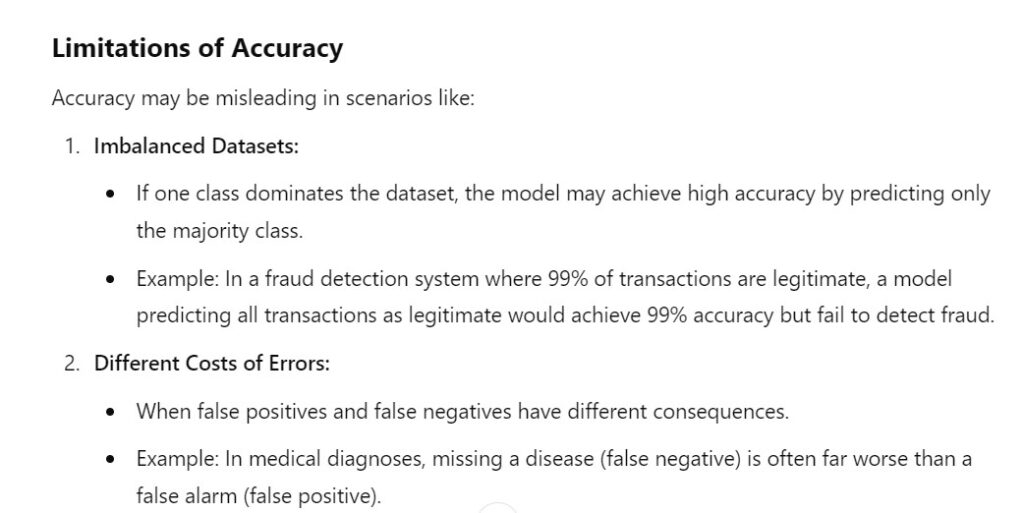

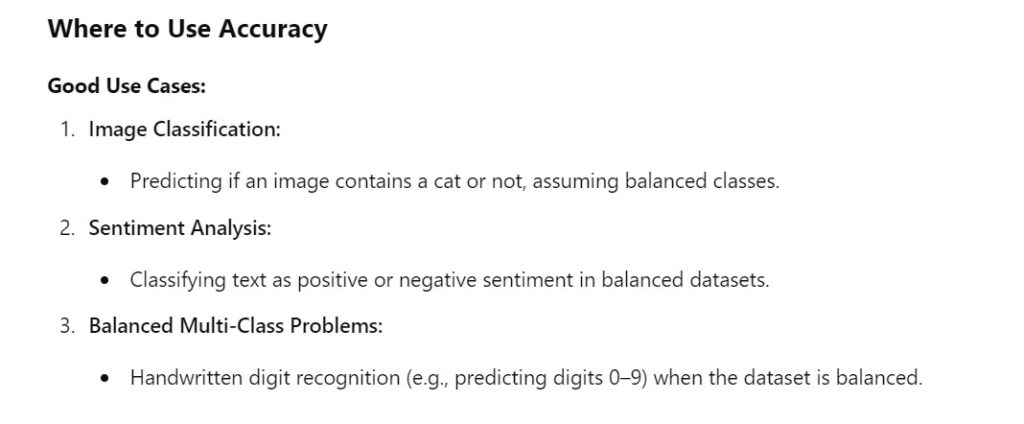

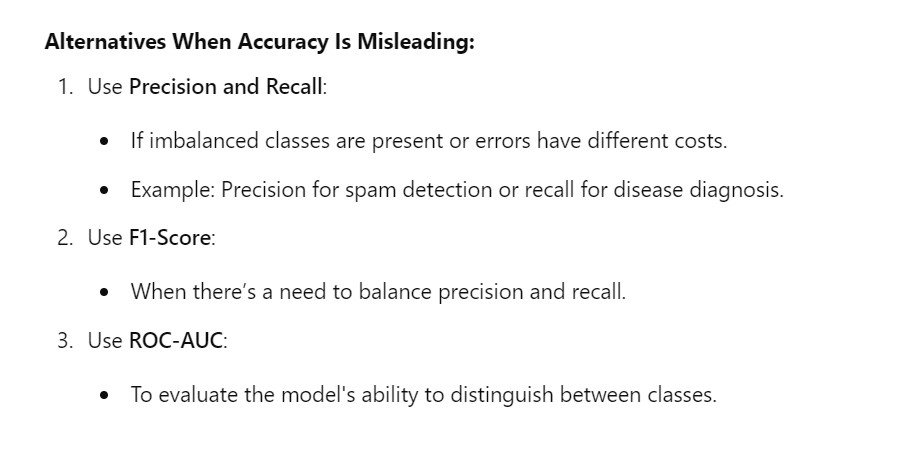
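The four cells (TP, FP, FN, TN) can be counted directly from a list of actual labels and a list of predicted labels. The following is a minimal Python sketch, assuming a binary problem in which the label `1` marks the positive class; the function name `confusion_counts` and the sample labels are hypothetical, chosen only for illustration.

```python
from typing import Dict, Sequence


def confusion_counts(y_true: Sequence[int], y_pred: Sequence[int], positive: int = 1) -> Dict[str, int]:
    """Count TP, FP, FN and TN for a binary problem.

    `positive` is the label treated as the positive class (assumed to be 1).
    """
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    counts = {"TP": 0, "FP": 0, "FN": 0, "TN": 0}
    for actual, predicted in zip(y_true, y_pred):
        if predicted == positive:
            # Predicted positive: right -> True Positive, wrong -> False Positive
            counts["TP" if actual == positive else "FP"] += 1
        else:
            # Predicted negative: missed positive -> False Negative, else True Negative
            counts["FN" if actual == positive else "TN"] += 1
    return counts


# Hypothetical labels for eight samples
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 1, 0, 1, 0]
print(confusion_counts(y_true, y_pred))
# {'TP': 3, 'FP': 1, 'FN': 1, 'TN': 3}
```

In practice, `sklearn.metrics.confusion_matrix` returns the same counts as a 2x2 array, with actual classes on the rows and predicted classes on the columns.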

(3) Accuracy

- On an imbalanced dataset, Accuracy can give a misleading impression of model performance. If 95% of the samples belong to one class, a model that always predicts that class reaches 95% Accuracy while misclassifying every sample of the other class.

- Accuracy weights every prediction equally: it divides the number of correct predictions by the total number of samples. It therefore does not distinguish between False Positives and False Negatives; a model with many False Positives and a model with many False Negatives can have the same Accuracy.

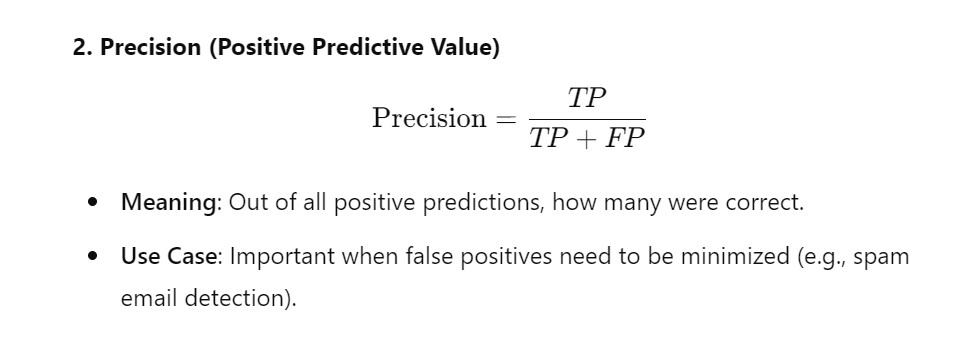

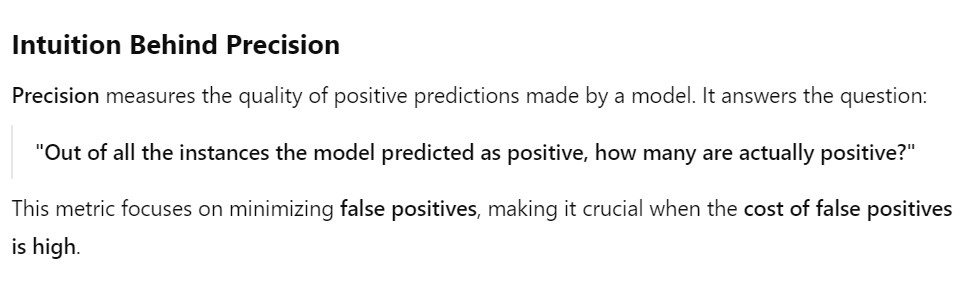

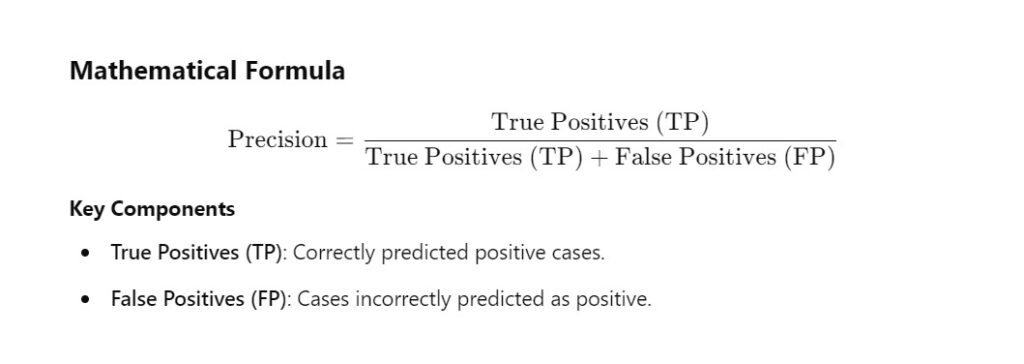

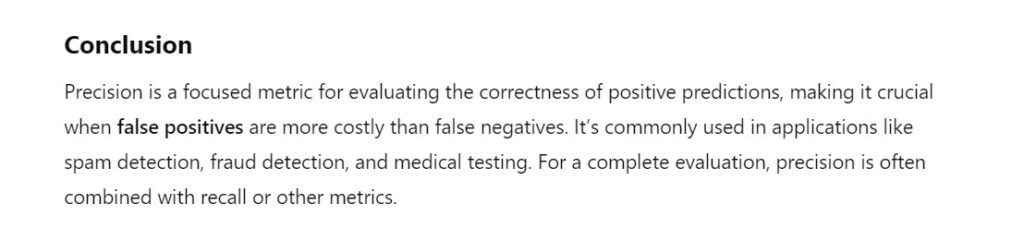

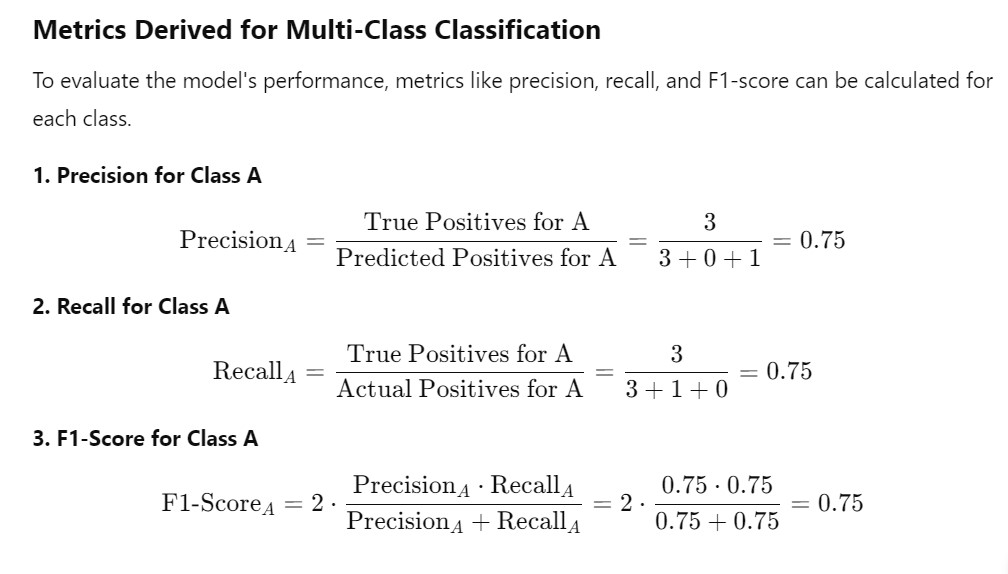
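To make both points concrete, here is a small Python sketch with hypothetical numbers: a test set of 950 negatives and 50 positives. The helper `accuracy` is illustrative, not taken from any library.

```python
def accuracy(tp: int, fp: int, fn: int, tn: int) -> float:
    """Accuracy = (TP + TN) / (TP + FP + FN + TN): correct predictions over all samples."""
    return (tp + tn) / (tp + fp + fn + tn)


# Hypothetical imbalanced test set: 950 negatives, 50 positives.
# A model that always predicts "negative" misclassifies every positive:
# TP = 0, FN = 50, TN = 950, FP = 0.
print(accuracy(tp=0, fp=0, fn=50, tn=950))  # 0.95

# Shifting errors between FP and FN (same 50 positives, 950 negatives)
# leaves accuracy unchanged:
print(accuracy(tp=40, fp=10, fn=10, tn=940))  # 0.98
print(accuracy(tp=50, fp=20, fn=0, tn=930))   # 0.98
```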

(4) Precision (Positive Predictive Value)

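- Precision focuses on one class only: the positive class.

- It answers the question: of all the samples the model predicted as positive, how many are actually positive? It matters most when False Positives are costly.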

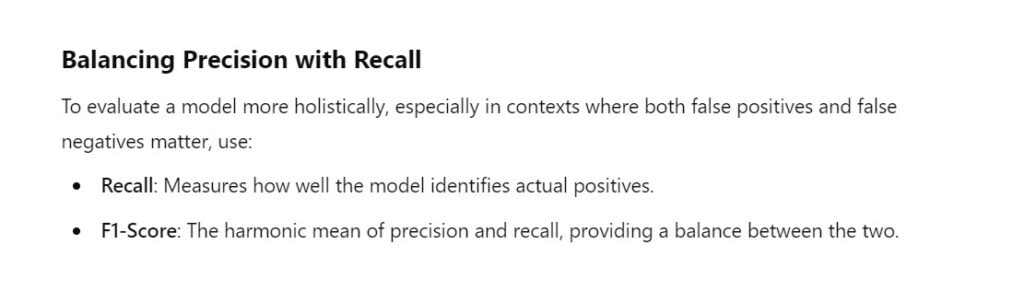

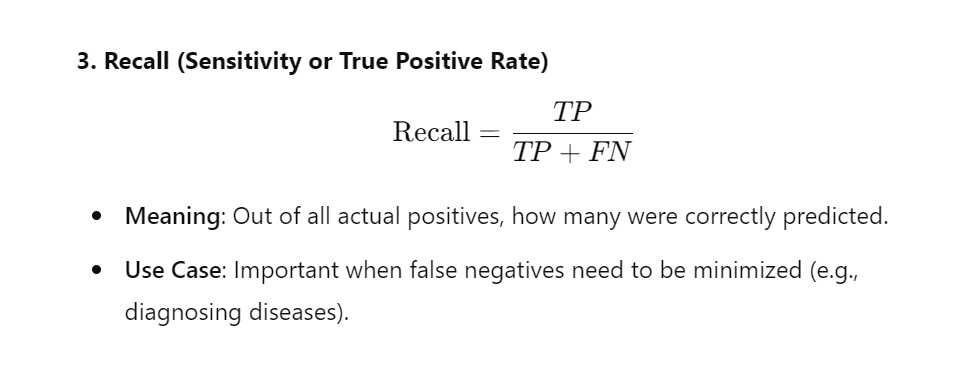
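A minimal Python sketch of precision computed from confusion-matrix counts. The counts are hypothetical, and returning 0.0 when the model predicts no positives at all is an assumed convention, since the formula is 0 / 0 in that case.

```python
def precision(tp: int, fp: int) -> float:
    """Precision (PPV) = TP / (TP + FP): share of predicted positives that are truly positive."""
    predicted_positive = tp + fp
    # Assumed convention: report 0.0 when the model predicts no positives at all,
    # since TP / (TP + FP) is undefined (0 / 0) in that case.
    return tp / predicted_positive if predicted_positive else 0.0


# Hypothetical counts: the model flagged 40 samples as positive, 30 correctly.
print(precision(tp=30, fp=10))  # 0.75
print(precision(tp=0, fp=0))    # 0.0
```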

(5) Recall (Sensitivity or True Positive Rate)

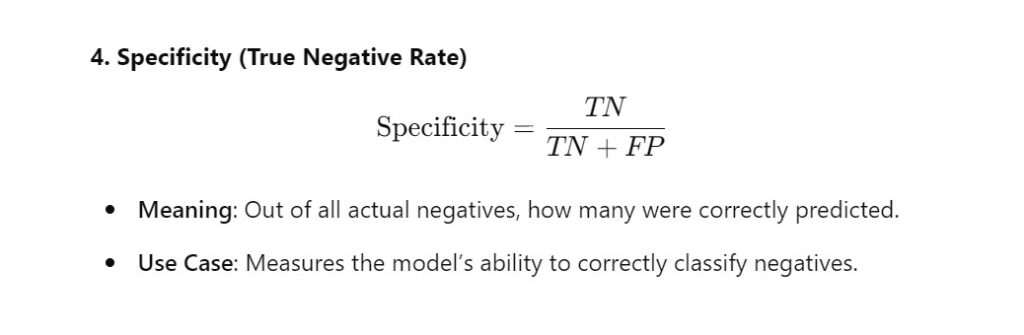

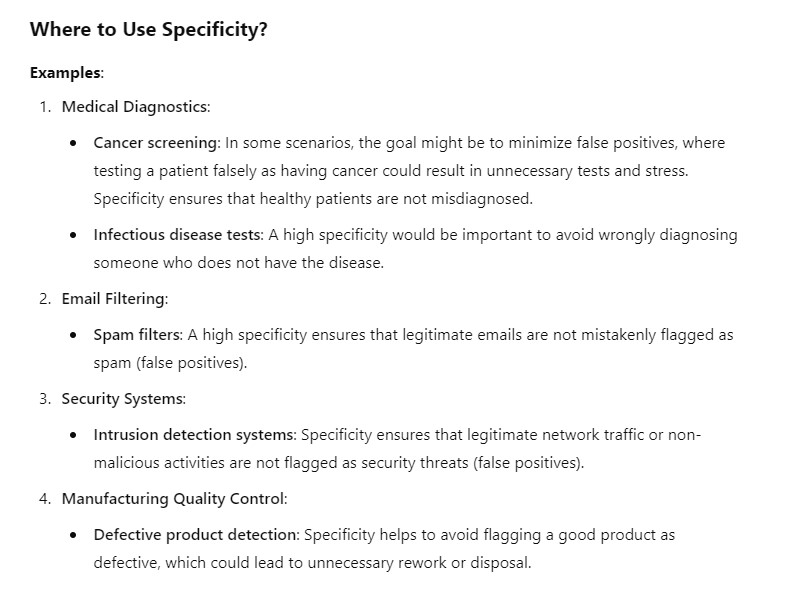

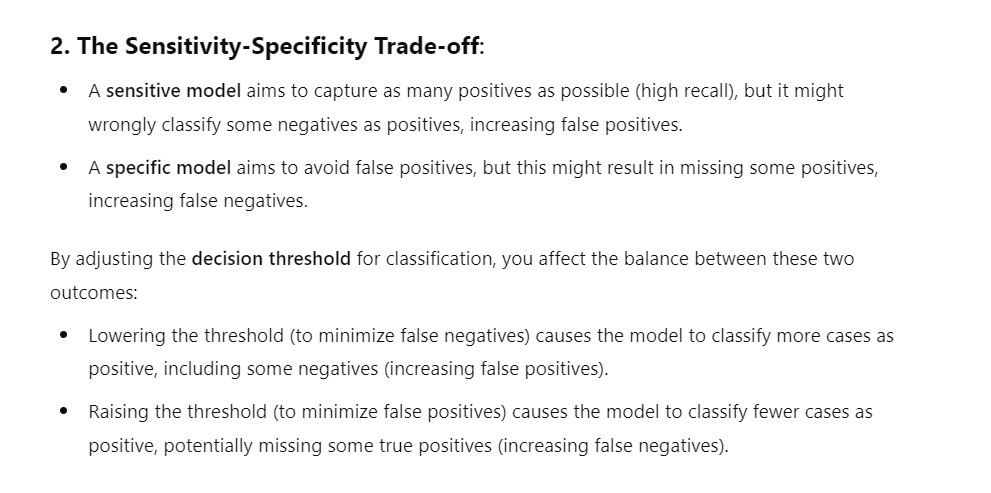
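Recall divides by the actual positives (TP + FN) rather than the predicted positives. A minimal Python sketch with hypothetical counts; the second call is a hypothetical model that predicts "negative" for all 50 actual positives, so it finds none of them even though its accuracy on an imbalanced set could still be high. Returning 0.0 when there are no actual positives is an assumed convention.

```python
def recall(tp: int, fn: int) -> float:
    """Recall (sensitivity, TPR) = TP / (TP + FN): share of actual positives the model found."""
    actual_positive = tp + fn
    # Assumed convention: 0.0 when there are no actual positives (0 / 0 otherwise).
    return tp / actual_positive if actual_positive else 0.0


# Hypothetical counts: 50 actual positives, 30 detected.
print(recall(tp=30, fn=20))  # 0.6
# Hypothetical model that predicts "negative" for all 50 actual positives.
print(recall(tp=0, fn=50))   # 0.0
```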

(6) Specificity (True Negative Rate)

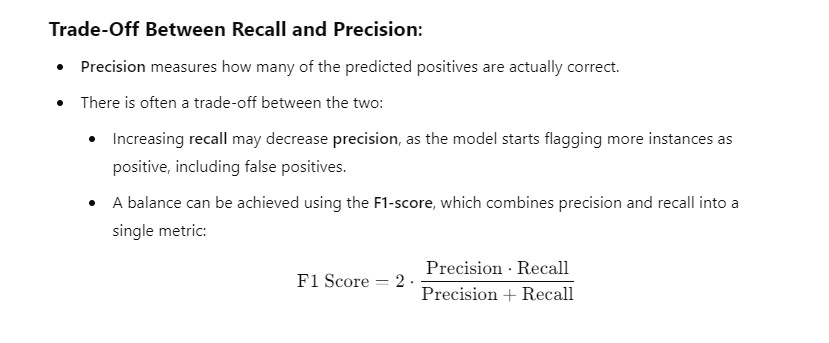

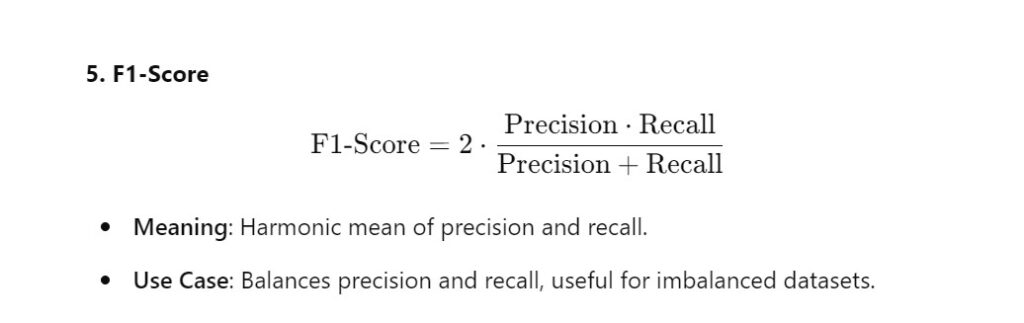
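Specificity is the negative-class counterpart of recall: it divides TN by all actual negatives (TN + FP). A minimal sketch with hypothetical counts; the name `specificity` is illustrative, and returning 0.0 when there are no actual negatives is an assumed convention.

```python
def specificity(tn: int, fp: int) -> float:
    """Specificity (TNR) = TN / (TN + FP): share of actual negatives predicted as negative."""
    actual_negative = tn + fp
    # Assumed convention: 0.0 when there are no actual negatives.
    return tn / actual_negative if actual_negative else 0.0


# Hypothetical counts: 100 actual negatives, 90 correctly predicted negative.
print(specificity(tn=90, fp=10))  # 0.9
```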

(7) F1-Score

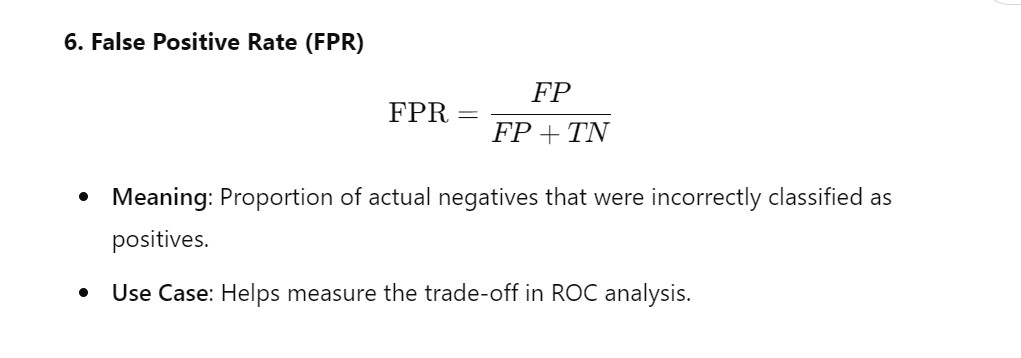
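F1-Score is the harmonic mean of precision and recall. The Python sketch below computes it from hypothetical counts and checks the equivalent closed form 2TP / (2TP + FP + FN); returning 0.0 when precision and recall are both zero is an assumed convention.

```python
def f1_score(tp: int, fp: int, fn: int) -> float:
    """F1 = 2 * precision * recall / (precision + recall), the harmonic mean of the two."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    # Assumed convention: F1 = 0.0 when precision and recall are both zero.
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical counts: precision = 30/40 = 0.75, recall = 30/50 = 0.6
f1 = f1_score(tp=30, fp=10, fn=20)
print(round(f1, 4))  # 0.6667

# Same value from the equivalent form 2TP / (2TP + FP + FN) = 60 / 90
print(round(2 * 30 / (2 * 30 + 10 + 20), 4))  # 0.6667
```

Because it is a harmonic mean, F1 stays low unless both precision and recall are reasonably high: a model with precision 1.0 and recall 0.1 gets an F1 of about 0.18, not the arithmetic mean of 0.55.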

(8) Fall-Out: False Positive Rate (FPR)

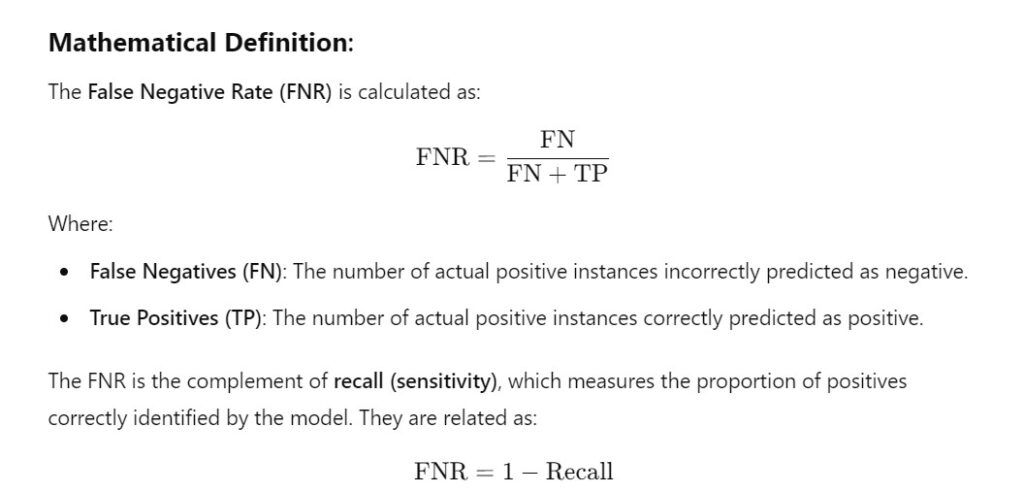

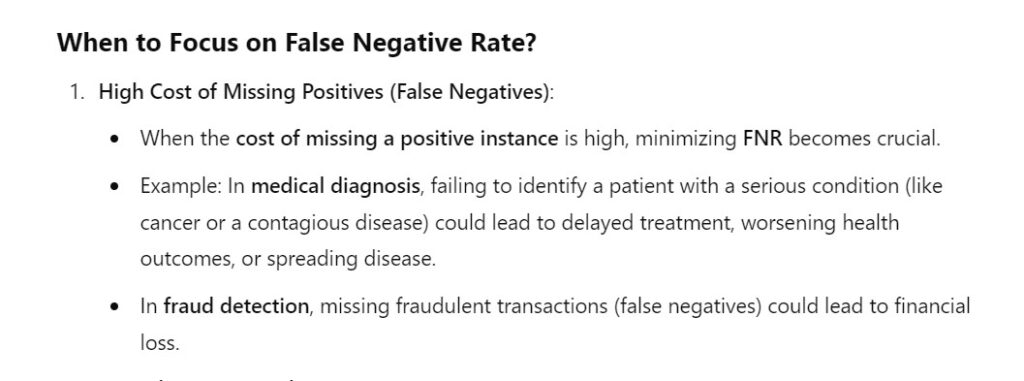

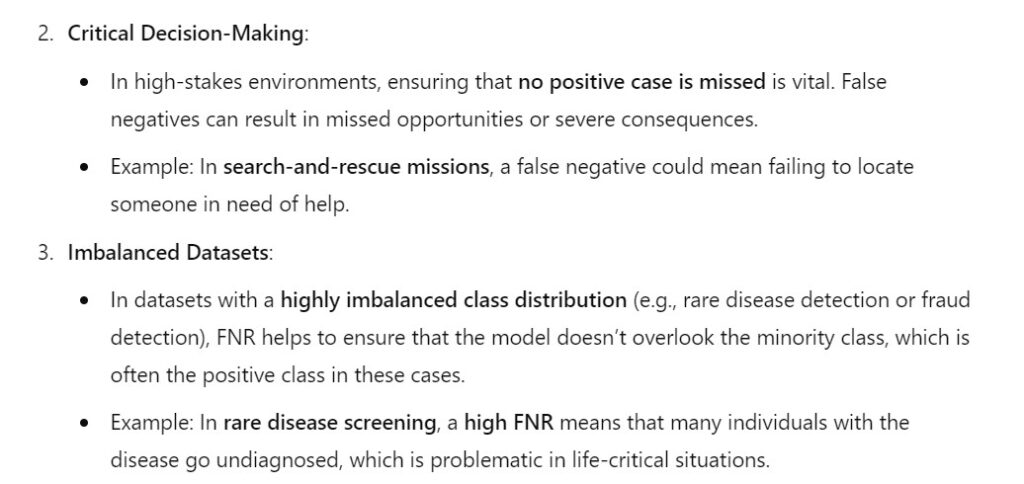

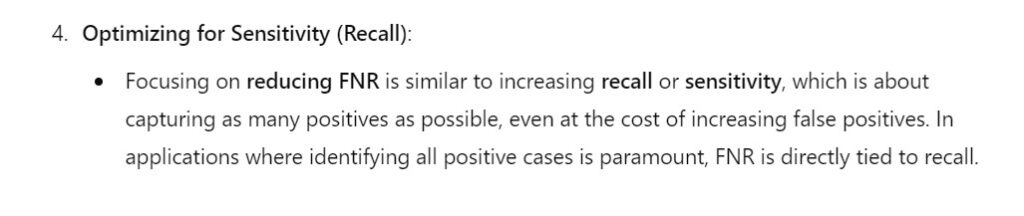

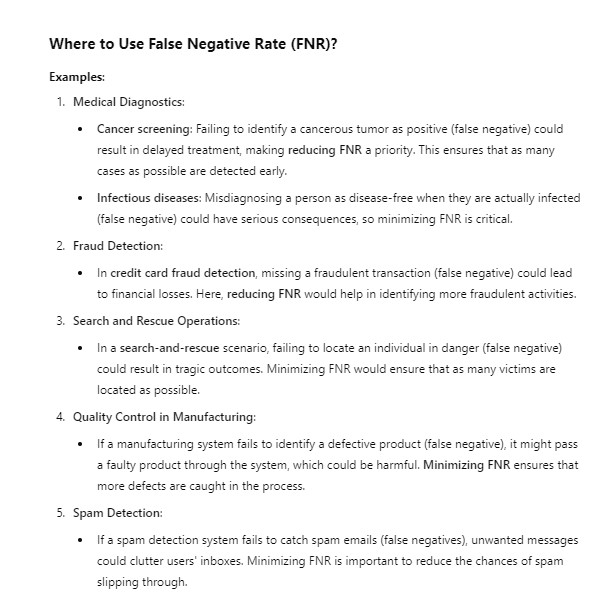
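The False Positive Rate is the share of actual negatives that the model wrongly flags as positive, FP / (FP + TN), which makes it the complement of specificity. A minimal sketch with hypothetical counts; the function name is illustrative, and returning 0.0 when there are no actual negatives is an assumed convention.

```python
def false_positive_rate(fp: int, tn: int) -> float:
    """FPR (fall-out) = FP / (FP + TN): share of actual negatives wrongly flagged positive."""
    actual_negative = fp + tn
    # Assumed convention: 0.0 when there are no actual negatives.
    return fp / actual_negative if actual_negative else 0.0


# Hypothetical counts: 100 actual negatives, 10 of them predicted positive.
fpr = false_positive_rate(fp=10, tn=90)
print(fpr)  # 0.1

# FPR is the complement of specificity, TN / (TN + FP) = 0.9.
# Compare with a tolerance: 1 - 0.9 is 0.09999999999999998 in floating point.
specificity = 90 / (90 + 10)
print(abs(fpr - (1 - specificity)) < 1e-12)  # True
```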

(9) Miss Rate: False Negative Rate (FNR)

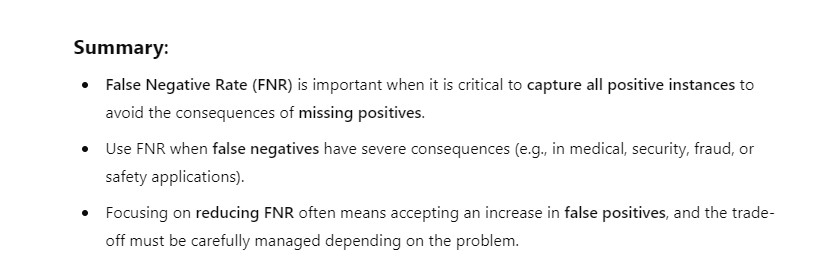
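The False Negative Rate is the share of actual positives that the model misses, FN / (FN + TP), which makes it the complement of recall. A minimal sketch with hypothetical counts; the function name is illustrative, and returning 0.0 when there are no actual positives is an assumed convention.

```python
def false_negative_rate(fn: int, tp: int) -> float:
    """FNR (miss rate) = FN / (FN + TP): share of actual positives the model missed."""
    actual_positive = fn + tp
    # Assumed convention: 0.0 when there are no actual positives.
    return fn / actual_positive if actual_positive else 0.0


# Hypothetical counts: 50 actual positives, 20 of them missed.
fnr = false_negative_rate(fn=20, tp=30)
print(fnr)  # 0.4

# FNR is the complement of recall, TP / (TP + FN) = 0.6.
recall = 30 / (30 + 20)
print(abs(fnr + recall - 1) < 1e-12)  # True
```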

(10) Balanced Accuracy

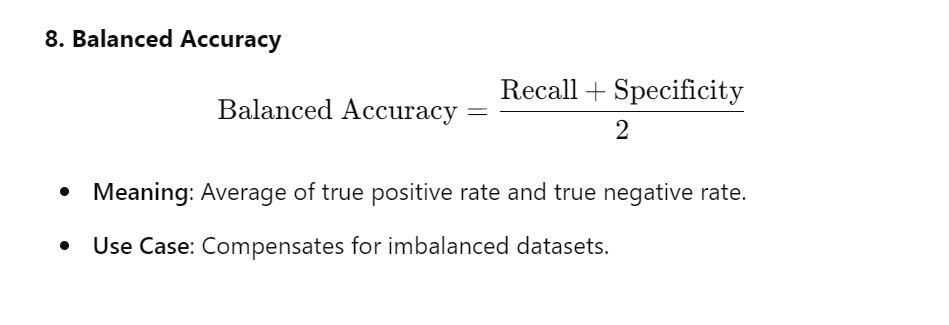
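For a binary problem, Balanced Accuracy is the mean of the True Positive Rate (recall) and the True Negative Rate (specificity), so each class contributes equally regardless of its size. The Python sketch below compares it with plain accuracy on a hypothetical imbalanced set; the function name is illustrative.

```python
def balanced_accuracy(tp: int, fp: int, fn: int, tn: int) -> float:
    """Balanced accuracy = (TPR + TNR) / 2, the mean of recall and specificity."""
    tpr = tp / (tp + fn)  # recall: assumes at least one actual positive
    tnr = tn / (tn + fp)  # specificity: assumes at least one actual negative
    return (tpr + tnr) / 2


# Hypothetical imbalanced set: 950 negatives, 50 positives, and a model
# that always predicts "negative" (TP = 0, FN = 50, TN = 950, FP = 0).
tp, fp, fn, tn = 0, 0, 50, 950
print((tp + tn) / (tp + fp + fn + tn))    # plain accuracy: 0.95
print(balanced_accuracy(tp, fp, fn, tn))  # balanced accuracy: 0.5
```

The always-negative model scores 0.5, the same as random guessing between two classes, which exposes what the 95% plain accuracy hides.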

(11) Example Of Confusion Matrix

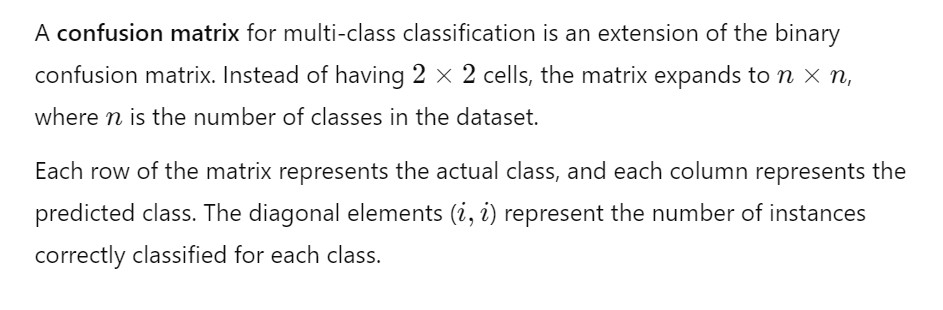

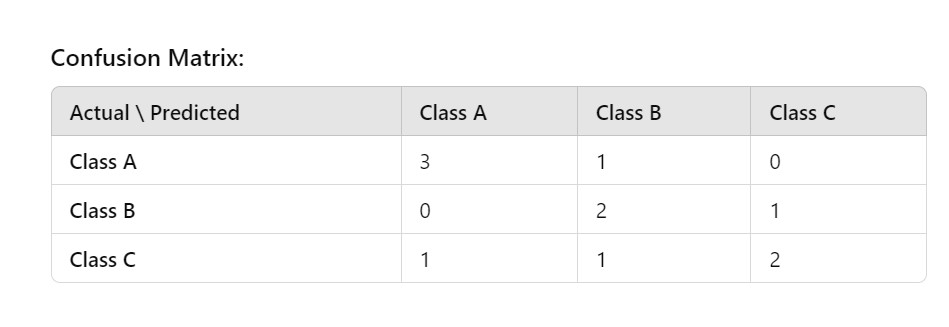

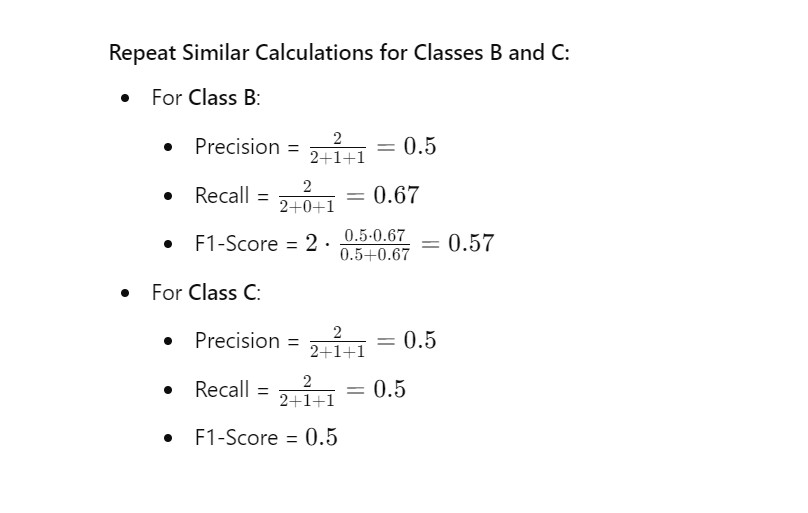

(12) Confusion Matrix For Multiple Classes

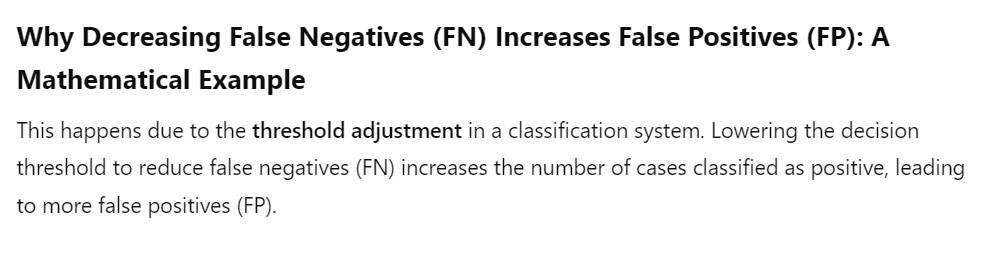

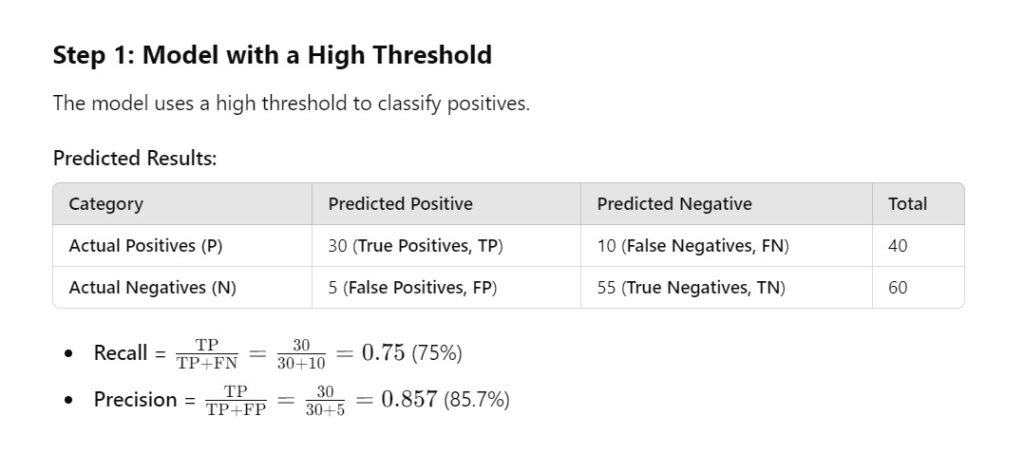

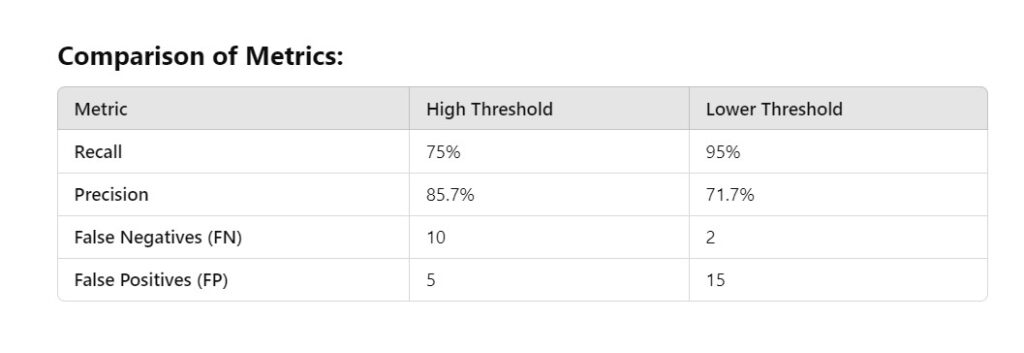

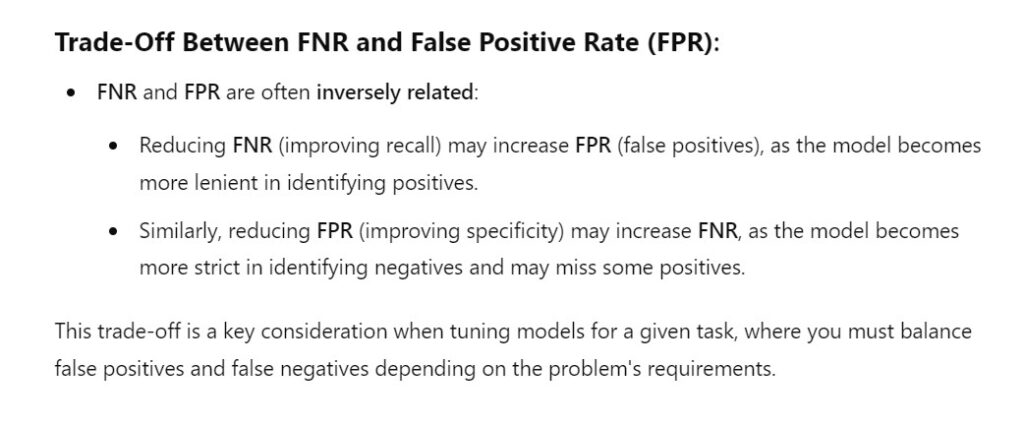
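With N classes the matrix becomes N x N, and per-class metrics are usually computed one-vs-rest: each class in turn is treated as "positive" and all others as "negative". The Python sketch below assumes actual classes on the rows and predicted classes on the columns (some references transpose this); the labels, data and function names are hypothetical.

```python
from typing import Dict, List, Sequence


def multiclass_confusion(y_true: Sequence[str], y_pred: Sequence[str], labels: List[str]) -> List[List[int]]:
    """Build an N x N confusion matrix; rows are actual classes, columns are predicted classes."""
    index = {label: i for i, label in enumerate(labels)}
    matrix = [[0] * len(labels) for _ in labels]
    for actual, predicted in zip(y_true, y_pred):
        matrix[index[actual]][index[predicted]] += 1
    return matrix


def per_class_metrics(matrix: List[List[int]], labels: List[str]) -> Dict[str, Dict[str, float]]:
    """One-vs-rest precision and recall: each class in turn is 'positive', the rest 'negative'."""
    n = len(labels)
    metrics = {}
    for k, label in enumerate(labels):
        tp = matrix[k][k]                              # diagonal cell
        fn = sum(matrix[k]) - tp                       # rest of row k: actual k, predicted other
        fp = sum(matrix[i][k] for i in range(n)) - tp  # rest of column k: predicted k, actual other
        metrics[label] = {
            "precision": tp / (tp + fp) if tp + fp else 0.0,
            "recall": tp / (tp + fn) if tp + fn else 0.0,
        }
    return metrics


# Hypothetical three-class example
labels = ["cat", "dog", "bird"]
y_true = ["cat", "cat", "cat", "dog", "dog", "bird", "bird", "bird"]
y_pred = ["cat", "cat", "dog", "dog", "dog", "bird", "cat", "bird"]

matrix = multiclass_confusion(y_true, y_pred, labels)
print(matrix)  # [[2, 1, 0], [0, 2, 0], [1, 0, 2]]

for label, m in per_class_metrics(matrix, labels).items():
    print(f"{label}: precision={m['precision']:.2f}, recall={m['recall']:.2f}")
# cat: precision=0.67, recall=0.67
# dog: precision=0.67, recall=1.00
# bird: precision=1.00, recall=0.67
```

Averaging the per-class values (a "macro" average) gives a single score in which every class counts equally.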

(13) Why Does Decreasing False Negatives Increase False Positives?