What Is Early Stopping?

Table Of Contents:

- What Is Early Stopping?

- Example To Understand – Classification Use Case.

- Understand The EarlyStopping() Method.

(1) What Is Early Stopping?

- Suppose you are training a neural network model. You have to specify how many epochs to train it for.

- The term “epoch” refers to a single complete pass of the training dataset through the neural network.

- How would you know how many epochs are enough to train your model well?

- You could simply pick a large number, say 1,000 epochs, and look at the result.

- But there is a problem with this approach: if you train your model for too many epochs, it will overfit the training data.

- It will give you good results on the training data but will perform poorly on the testing data.

- Early stopping addresses this by monitoring a validation metric during training and stopping once that metric stops improving.
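To make the epoch count concrete, here is a small sketch of how epochs translate into weight updates. It assumes mini-batch training with a batch size of 32 (the default in Keras `model.fit`) and 80 training samples; the helper name `updates_for` is made up for this illustration.

```python
import math


def updates_for(n_samples: int, batch_size: int, epochs: int) -> int:
    """Total number of gradient updates for a full training run."""
    # One epoch is one full pass over the data, split into mini-batches.
    steps_per_epoch = math.ceil(n_samples / batch_size)
    return steps_per_epoch * epochs


# 80 samples with batch size 32 -> 3 batches per epoch (32 + 32 + 16).
print(updates_for(n_samples=80, batch_size=32, epochs=1))     # 3
print(updates_for(n_samples=80, batch_size=32, epochs=3500))  # 10500
```

On a dataset this small, a large epoch count means the model sees every sample thousands of times, which is where the overfitting risk comes from.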

(2) Example To Understand – Classification Use Case

(1) Importing Required Libraries:

import tensorflow as tf

import numpy as np

import pandas as pd

from pylab import rcParams

import matplotlib.pyplot as plt

import warnings

from mlxtend.plotting import plot_decision_regions

from matplotlib.colors import ListedColormap

from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import Dropout

from tensorflow.keras.layers import Dense

from tensorflow.keras.callbacks import EarlyStopping

from sklearn.model_selection import train_test_split

from sklearn.datasets import make_circles

import seaborn as sns

(2) Loading Data Set

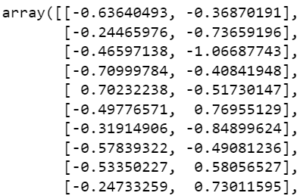

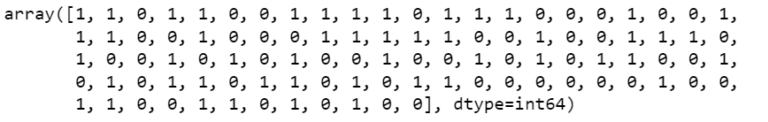

- We will use the make_circles() function to create our own dataset of 100 points.

X, y = make_circles(n_samples=100, noise=0.1, random_state=1)

print(X)

print(y)

print(X.shape)

print(y.shape)

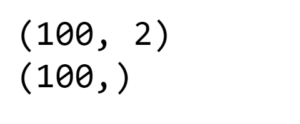

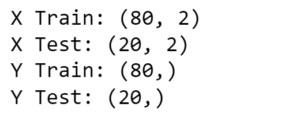

(3) Train Test Split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 0.2, random_state = 2)

print('X Train:', X_train.shape)

print('X Test:', X_test.shape)

print('Y Train:', y_train.shape)

print('Y Test:', y_test.shape)

(4) Model Building

model = Sequential()

model.add(Dense(256, input_dim=2, activation='relu'))

model.add(Dense(1, activation='sigmoid'))

(5) Model Compilation

model.compile(loss = "binary_crossentropy", optimizer = "adam", metrics = ['accuracy'])
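The loss passed to compile() above is binary cross-entropy. As a rough illustration of what that number means, the sketch below computes it by hand for a few predictions. The function name and the clipping constant `eps` are assumptions for this example, not Keras internals.

```python
import math


def binary_crossentropy(y_true, y_prob, eps=1e-7):
    """Mean binary cross-entropy over a batch of (label, probability) pairs."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        # Clip the probability so that log(0) never occurs.
        p = min(max(p, eps), 1.0 - eps)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)


confident_and_right = binary_crossentropy([1, 0], [0.9, 0.1])
confident_and_wrong = binary_crossentropy([1, 0], [0.1, 0.9])
print(confident_and_right)  # about 0.105
print(confident_and_wrong)  # about 2.303
```

Confident wrong predictions are penalized much more heavily than confident right ones are rewarded, which is why a rising validation loss is a useful overfitting signal.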

(6) Model Training

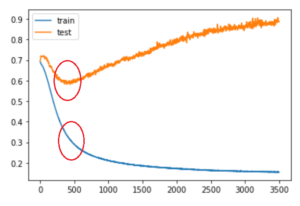

history = model.fit(X_train, y_train, validation_data = (X_test, y_test), epochs = 3500, verbose = 0)

(7) Plotting Training Vs Validation Loss

plt.plot(history.history['loss'], label='train')

plt.plot(history.history['val_loss'], label = 'test')

plt.legend()

plt.show()

- The blue line is the training loss and the yellow line is the testing loss.

- The X-axis represents the number of epochs and the Y-axis represents the total loss.

- Here you can see that the training loss keeps decreasing: when you train your model on the same training data many times, it fits that data more and more closely.

- After a particular epoch, however, the validation loss stops decreasing and starts to rise.

- This is called overfitting: the model's performance on the validation set degrades even though the training loss continues to improve.

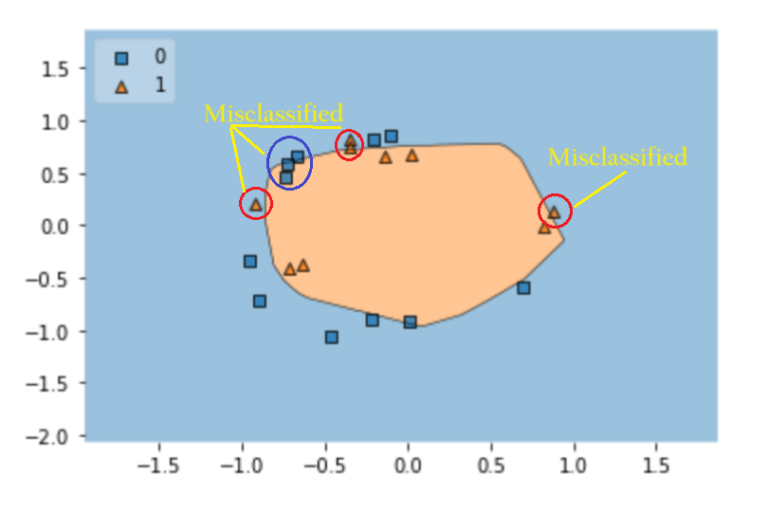
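One way to read such a plot programmatically is to look for the first epoch where the validation loss goes up while the training loss still goes down. The sketch below does this on a made-up dictionary shaped like history.history; the numbers are invented for illustration and are not results from the model above.

```python
def first_divergence(history):
    """Return the first epoch index where val_loss rises while loss falls, or None."""
    loss, val_loss = history["loss"], history["val_loss"]
    for epoch in range(1, len(loss)):
        train_improved = loss[epoch] < loss[epoch - 1]
        val_worsened = val_loss[epoch] > val_loss[epoch - 1]
        if train_improved and val_worsened:
            return epoch
    return None


# Invented values, laid out like Keras' history.history.
fake_history = {
    "loss":     [0.70, 0.55, 0.42, 0.33, 0.25, 0.18],
    "val_loss": [0.71, 0.58, 0.47, 0.45, 0.49, 0.56],
}
print(first_divergence(fake_history))  # 4
```

On real, noisy curves a single uptick is not a reliable signal, so a check like this is only a starting point for inspecting the plot.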

(8) Plot Decision Regions

plot_decision_regions(X_test, y_test.ravel(), clf = model, legend = 2)

plt.show()

- Here you can see that your model has misclassified some of the data points.

(9) Implementing Early Stopping

model = Sequential()

model.add(Dense(256, input_dim = 2, activation = 'relu'))

model.add(Dense(1, activation = 'sigmoid'))

model.compile(loss='binary_crossentropy', optimizer='adam', metrics=['accuracy'])

callback = EarlyStopping(

monitor="val_loss",

min_delta=0.00001,

patience=20,

verbose=1,

mode="auto",

baseline=None,

restore_best_weights=False

)

history = model.fit(X_train, y_train, validation_data = (X_test, y_test), epochs=3500, callbacks=[callback])

- In the model fitting stage, you pass the EarlyStopping callback to model.fit() through the callbacks argument.

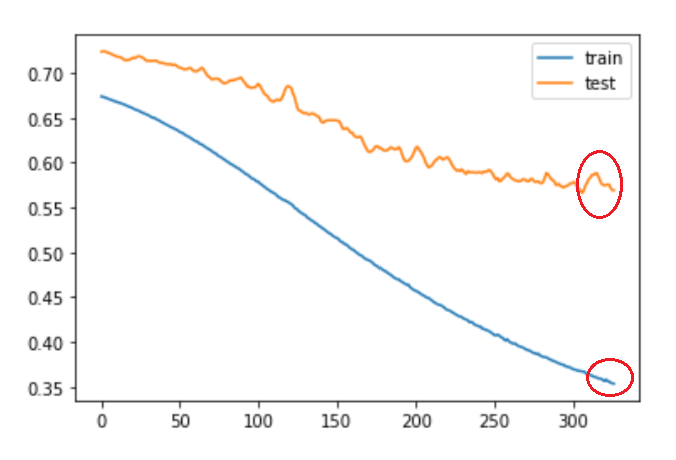

plt.plot(history.history['loss'], label='train')

plt.plot(history.history['val_loss'], label='test')

plt.legend()

plt.show()

- You can see from the above diagram that once the validation loss has failed to improve for 20 consecutive epochs (the patience value), training stops automatically.

- This keeps the model from overfitting the training dataset any further.

plot_decision_regions(X_train, y_train.ravel(), clf=model, legend=2)

plt.show()

- Still, the model misclassifies many of the data points.
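One possible contributor to the remaining errors, though not necessarily the cause here, is that the callback above uses restore_best_weights=False, so the model keeps the weights from the last epoch that ran rather than from the best one. The sketch below compares those two epochs on an invented validation-loss list; with a real run the list would be history.history['val_loss'].

```python
def best_and_last(val_loss):
    """Return (best_epoch, last_epoch, extra_loss) for a finished run."""
    best_epoch = min(range(len(val_loss)), key=val_loss.__getitem__)
    last_epoch = len(val_loss) - 1
    # How much worse the kept (last-epoch) weights score than the best ones.
    extra_loss = val_loss[last_epoch] - val_loss[best_epoch]
    return best_epoch, last_epoch, extra_loss


# Invented values: the best epoch is 2, followed by three epochs without improvement.
val_loss = [0.60, 0.50, 0.44, 0.46, 0.47, 0.49]
best_epoch, last_epoch, extra_loss = best_and_last(val_loss)
print(best_epoch, last_epoch, round(extra_loss, 2))  # 2 5 0.05
```

Setting restore_best_weights=True asks Keras to roll the model back to the best epoch's weights when training stops.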

(3) Understand The EarlyStopping() Method

from tensorflow.keras.callbacks import EarlyStopping

EarlyStopping(

monitor="val_loss",

min_delta=0,

patience=0,

verbose=0,

mode="auto",

baseline=None,

restore_best_weights=False,

start_from_epoch=0,

)

- Stops training when a monitored metric has stopped improving.

- Assume the goal of training is to minimize the loss.

- In that case, the metric to be monitored would be 'loss', and the mode would be 'min'.

- The model.fit() training loop checks at the end of every epoch whether the loss is no longer decreasing, taking min_delta and patience into account if applicable.

- Once the loss is found to be no longer decreasing, model.stop_training is set to True and training terminates.
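The per-epoch check described above can be sketched in plain Python. This is a simplified stand-in that only handles the 'min' case with min_delta and patience; the class name `SimpleEarlyStopping` is hypothetical and the real Keras callback does more (mode, baseline, weight restoring, warm-up).

```python
class SimpleEarlyStopping:
    """Simplified stand-in for the per-epoch check; not the Keras class."""

    def __init__(self, patience=0, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")   # lowest loss seen so far
        self.wait = 0              # epochs since the last improvement
        self.stop_training = False

    def on_epoch_end(self, loss):
        # An improvement must beat the best value by more than min_delta.
        if loss < self.best - self.min_delta:
            self.best = loss
            self.wait = 0
        else:
            self.wait += 1
            if self.wait >= self.patience:
                self.stop_training = True


# Invented loss values for illustration.
losses = [0.90, 0.70, 0.60, 0.61, 0.60, 0.62, 0.59]
stopper = SimpleEarlyStopping(patience=3, min_delta=0.001)
stopped_epoch = None
for epoch, loss in enumerate(losses):
    stopper.on_epoch_end(loss)
    if stopper.stop_training:
        stopped_epoch = epoch
        break
print(stopped_epoch, stopper.best)  # 5 0.6
```

Note that the run stops at epoch 5 and never reaches the slightly better value 0.59 at epoch 6; a larger patience trades longer training for a lower chance of stopping too soon.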

Arguments:

- monitor: Quantity to be monitored. Defaults to "val_loss".

- min_delta: Minimum change in the monitored quantity to qualify as an improvement, i.e. an absolute change of less than min_delta will count as no improvement. Defaults to 0.

- patience: Number of epochs with no improvement after which training will be stopped. Defaults to 0.

- verbose: Verbosity mode, 0 or 1. Mode 0 is silent, and mode 1 displays messages when the callback takes an action. Defaults to 0.

- mode: One of {"auto", "min", "max"}. In "min" mode, training will stop when the quantity monitored has stopped decreasing; in "max" mode it will stop when the quantity monitored has stopped increasing; in "auto" mode, the direction is automatically inferred from the name of the monitored quantity. Defaults to "auto".

- baseline: Baseline value for the monitored quantity. If not None, training will stop if the model doesn’t show improvement over the baseline. Defaults to None.

- restore_best_weights: Whether to restore model weights from the epoch with the best value of the monitored quantity. If False, the model weights obtained at the last step of training are used. An epoch will be restored regardless of the performance relative to the baseline. If no epoch improves on baseline, training will run for patience epochs and restore weights from the best epoch in that set. Defaults to False.

- start_from_epoch: Number of epochs to wait before starting to monitor improvement. This allows for a warm-up period in which no improvement is expected and thus training will not be stopped. Defaults to 0.