
Accuracy, Precision, Recall, F1-Score

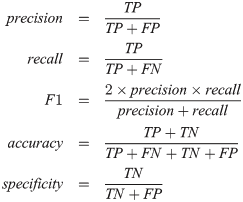

Table Of Contents:

- Confusion Matrix
- Accuracy
- Precision
- Recall
- F1-Score
- Specificity (True Negative Rate)
- Fall-Out: False Positive Rate (FPR)
- Miss Rate: False Negative Rate (FNR)
- Balanced Accuracy
- Example Of Confusion Matrix
- Confusion Matrix For Multiple Classes

(1) Confusion Matrix

A confusion matrix is a tabular representation of the performance of a classification model. It helps visualize and understand how well a model’s predictions align with the actual outcomes, especially in binary and multi-class classification.

(2) Structure Of Confusion Matrix

Definition:

(3) Accuracy

Accuracy is the fraction of all predictions that are correct. For an imbalanced dataset, accuracy can give a misleading impression of the model: a classifier that always predicts the majority class scores a high accuracy while never identifying a single minority-class example.
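The effect of class imbalance on accuracy can be illustrated with a minimal sketch. The dataset below is hypothetical (5 positives, 95 negatives), and the model simply predicts the negative class every time. The helper names `confusion_counts`, `safe_div`, and `metrics` are illustrative, not part of any library. The formulas are the standard binary definitions of the metrics listed in the table of contents, and returning 0.0 when a denominator is zero is an assumed convention.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (tp, fp, fn, tn) for a binary classification problem."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    return tp, fp, fn, tn


def safe_div(numerator, denominator):
    """Divide, returning 0.0 when the denominator is zero (assumed convention)."""
    return numerator / denominator if denominator else 0.0


def metrics(tp, fp, fn, tn):
    """Compute the standard binary metrics from confusion-matrix counts."""
    precision = safe_div(tp, tp + fp)
    recall = safe_div(tp, tp + fn)          # true positive rate
    specificity = safe_div(tn, tn + fp)     # true negative rate
    return {
        "accuracy": safe_div(tp + tn, tp + fp + fn + tn),
        "precision": precision,
        "recall": recall,
        "f1": safe_div(2 * precision * recall, precision + recall),
        "specificity": specificity,
        "fall_out": safe_div(fp, fp + tn),   # false positive rate
        "miss_rate": safe_div(fn, fn + tp),  # false negative rate
        "balanced_accuracy": (recall + specificity) / 2,
    }


# Hypothetical imbalanced dataset: 5 positives, 95 negatives.
y_true = [1] * 5 + [0] * 95
# A model that always predicts the majority (negative) class.
y_pred = [0] * 100

counts = confusion_counts(y_true, y_pred)
scores = metrics(*counts)

print("tp, fp, fn, tn =", counts)
for name, value in scores.items():
    print(f"{name}: {value:.2f}")
```

In this sketch accuracy comes out at 0.95 even though recall is 0.0 and balanced accuracy is only 0.5, which is why accuracy alone is a poor guide on imbalanced data.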