Convolutional Neural Networks

Syllabus:

1. Fundamentals of CNNs

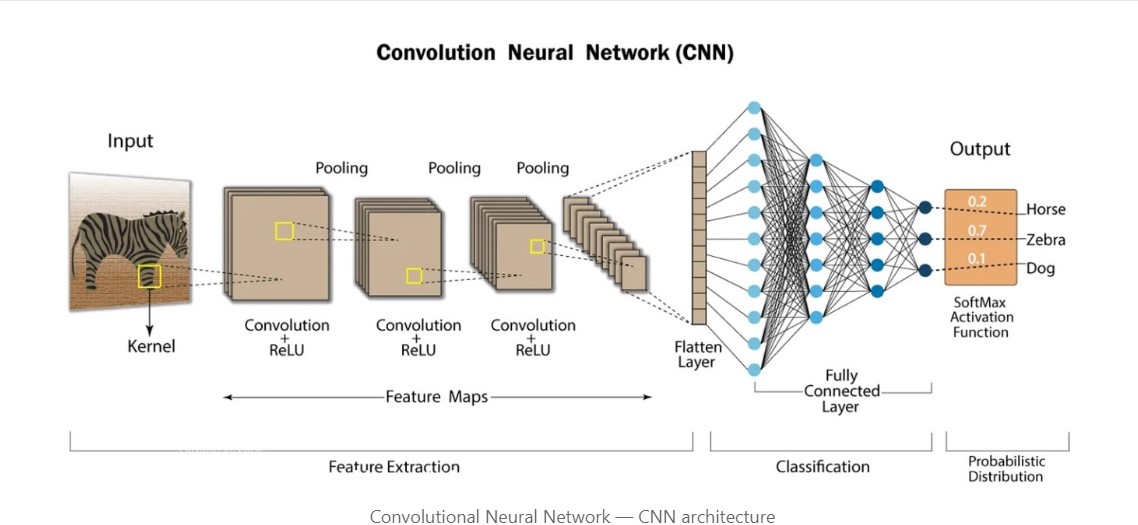

- What are CNNs?

- Differences between CNNs and fully connected networks.

- Applications of CNNs.

- Components of CNNs
  - Convolution layers.
  - Filters (Kernels) and their role.
  - Stride, Padding, and their effects.

- Activation Functions
  - ReLU, Sigmoid, Tanh, etc.

- Pooling Layers
  - Max pooling, Average pooling, Global pooling.

- Fully Connected Layers
  - How they integrate features learned from convolutional layers.
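
As a small worked illustration of the stride and padding topic in this unit, the sketch below computes the spatial output size of a convolution or pooling layer along one dimension, using the standard formula floor((n + 2p - k) / s) + 1. The function name `conv_output_size` and its parameters are illustrative and do not come from any framework.

```python
def conv_output_size(n, kernel, stride=1, padding=0):
    """Output size along one spatial dimension.

    Applies floor((n + 2*padding - kernel) / stride) + 1, which holds for
    both convolution and pooling windows (dilation is assumed to be 1).
    """
    if n + 2 * padding < kernel:
        raise ValueError("kernel does not fit inside the padded input")
    return (n + 2 * padding - kernel) // stride + 1


# A 32-pixel-wide input under a few common settings:
same = conv_output_size(32, kernel=3, stride=1, padding=1)     # size preserved
valid = conv_output_size(32, kernel=3, stride=1, padding=0)    # shrinks by kernel - 1
strided = conv_output_size(32, kernel=3, stride=2, padding=1)  # halved
pooled = conv_output_size(32, kernel=2, stride=2)              # 2x2 pooling, halved

print(same, valid, strided, pooled)
```

Padding of 1 with a 3-wide kernel keeps the size unchanged, while a stride of 2 halves it; this is the arithmetic behind most downsampling stages in the architectures covered later.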

2. Advanced Architectures and Concepts

- Popular CNN Architectures
  - LeNet, AlexNet, VGG, ResNet, Inception, MobileNet, EfficientNet.

- Residual Networks (ResNets)
  - Skip connections and their significance.

- Inception Networks
  - Factorized convolutions and parallel multi-branch ("mixed") modules.

- Depthwise Separable Convolutions
  - Efficiency in MobileNet and EfficientNet.

- Dilated (Atrous) Convolutions
  - For increasing receptive fields without losing resolution.

- Group Convolutions
  - In architectures like ResNeXt.
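
To make the efficiency claims in this unit concrete, the sketch below counts weights for a standard convolution, a depthwise separable convolution, and a group convolution, and computes the effective kernel size of a dilated convolution. All function names are illustrative, biases are ignored, and the layer sizes are arbitrary example values.

```python
def standard_conv_params(c_in, c_out, k):
    """Weights in a k x k convolution mapping c_in to c_out channels."""
    return k * k * c_in * c_out


def depthwise_separable_params(c_in, c_out, k):
    """One k x k filter per input channel, then a 1x1 pointwise convolution."""
    depthwise = k * k * c_in
    pointwise = c_in * c_out
    return depthwise + pointwise


def group_conv_params(c_in, c_out, k, groups):
    """Each output channel only sees the c_in / groups channels of its group."""
    if c_in % groups or c_out % groups:
        raise ValueError("channel counts must be divisible by groups")
    return k * k * (c_in // groups) * c_out


def effective_kernel_size(k, dilation):
    """Span covered by a k-tap kernel whose taps are `dilation` apart."""
    return dilation * (k - 1) + 1


standard = standard_conv_params(64, 128, 3)
separable = depthwise_separable_params(64, 128, 3)
grouped = group_conv_params(64, 128, 3, groups=32)
span = effective_kernel_size(3, dilation=2)

print(standard, separable, grouped, span)
```

For this example layer the depthwise separable variant uses roughly 8x fewer weights than the standard convolution, and a 3x3 kernel with dilation 2 covers a 5x5 window with the same nine weights.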

3. Techniques to Improve Performance

- Batch Normalization
  - Normalizing layer inputs to stabilize training (originally motivated as reducing internal covariate shift).

- Dropout
  - Preventing overfitting.

- Data Augmentation
  - Random cropping, flipping, rotation, etc.

- Transfer Learning
  - Pretrained models and fine-tuning.

- Learning Rate Scheduling
  - Warm restarts, step decay, etc.
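
The two learning rate schedules named in this unit can be sketched in a few lines. This is a minimal illustration, assuming a fixed cycle length for the warm restarts (cycles that grow over time are also common); the function names and default values are hypothetical rather than taken from a specific framework.

```python
import math


def step_decay(base_lr, epoch, drop=0.1, epochs_per_drop=30):
    """Multiply the learning rate by `drop` every `epochs_per_drop` epochs."""
    return base_lr * drop ** (epoch // epochs_per_drop)


def cosine_warm_restarts(base_lr, epoch, cycle_len=10, min_lr=0.0):
    """Cosine annealing from base_lr to min_lr, restarting every cycle_len epochs."""
    t = epoch % cycle_len  # position inside the current cycle
    cosine = 1 + math.cos(math.pi * t / cycle_len)
    return min_lr + 0.5 * (base_lr - min_lr) * cosine


for epoch in (0, 5, 9, 10, 30):
    print(epoch, step_decay(0.1, epoch), cosine_warm_restarts(0.1, epoch))
```

With warm restarts the rate falls along a cosine curve and then jumps back to `base_lr` at the start of each cycle, whereas step decay only ever moves downward.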

4. Training CNNs

- Loss Functions
  - Cross-entropy, Mean Squared Error (MSE), etc.

- Optimization Algorithms
  - SGD, Adam, RMSprop, etc.

- Weight Initialization Techniques
  - Xavier, He initialization.

- Overfitting and Underfitting
  - Techniques to mitigate both.
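
As an illustration of the weight initialization topic, the sketch below computes the standard deviations used by the normal-distribution variants of Xavier (Glorot) and He initialization for a convolutional layer. It assumes the common convention that fan-in and fan-out are the channel count times the kernel area; the helper names are illustrative.

```python
import math
import random


def conv_fans(c_in, c_out, k):
    """Fan-in and fan-out of a k x k convolution (channels times kernel area)."""
    return c_in * k * k, c_out * k * k


def xavier_std(fan_in, fan_out):
    """Xavier/Glorot normal: variance 2 / (fan_in + fan_out)."""
    return math.sqrt(2.0 / (fan_in + fan_out))


def he_std(fan_in):
    """He normal: variance 2 / fan_in, intended for ReLU activations."""
    return math.sqrt(2.0 / fan_in)


fan_in, fan_out = conv_fans(c_in=64, c_out=64, k=3)
x_std = xavier_std(fan_in, fan_out)
h_std = he_std(fan_in)

# Draw a few example weights from the He distribution (seeded for repeatability).
rng = random.Random(0)
sample = [rng.gauss(0.0, h_std) for _ in range(5)]

print(fan_in, fan_out, x_std, h_std)
```

When fan-in equals fan-out, the He standard deviation is larger than the Xavier one by a factor of sqrt(2), which compensates for ReLU zeroing out roughly half of the activations.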

5. Applications and Use Cases

- Image Classification
  - Multi-class, binary, and multi-label classification.

- Object Detection
  - YOLO, SSD, Faster R-CNN.

- Semantic Segmentation
  - Fully Convolutional Networks (FCNs), U-Net.

- Image Generation
  - GANs and Variational Autoencoders (VAEs).

- Super-resolution and Image Enhancement

6. Specialized Topics

- Attention Mechanisms in CNNs
  - Self-attention, Squeeze-and-Excitation Networks.

- Capsule Networks
  - Aiming to model spatial hierarchies (part-whole relationships) better than traditional CNNs.

- 3D Convolutions
  - For video and volumetric data processing.

- Recurrent Convolutional Networks
  - Combining CNNs and RNNs for sequence data.

7. Practical Tools and Libraries

- Deep Learning Frameworks
  - TensorFlow/Keras, PyTorch.

- Visualization Tools
  - TensorBoard, Grad-CAM.

- Deployment
  - ONNX, TensorRT, CoreML.

8. Real-World Challenges

- Data Imbalance
  - Techniques like oversampling (e.g., SMOTE).

- Computational Efficiency
  - Quantization, pruning, knowledge distillation.

- Explainability
  - Interpreting CNN decisions (e.g., Grad-CAM).
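
To give a feel for the quantization topic listed under computational efficiency, here is a minimal sketch of symmetric per-tensor int8 quantization of a weight list. It is an assumption-laden toy (one scale for the whole tensor, no calibration data, plain Python lists), and the function names are hypothetical; real deployment toolchains apply more elaborate schemes.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float values from the integer codes."""
    return [v * scale for v in q]


weights = [0.5, -1.27, 0.0, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding to the nearest code bounds the error by half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, scale, max_error)
```

Each weight is stored as one signed byte plus a shared scale, and the round-trip error stays within half a quantization step, which is the trade-off between model size and precision that this topic explores.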